Discuss Scratch

- Layzej

Scratcher

100+ posts

Non-photorealistic rendering projects

One of them was dithered in market-leading graphics software, one was dithered in a programming environment for children. Can you guess which one is which?

XD!!!

- PullJosh

Scratcher

1000+ posts

nothing is permanent

Last edited by PullJosh (June 14, 2016 14:04:16)

- MartinBraendli2

Scratcher

100+ posts

> The undithered reference grey is 180,180,180? Photoshop is not even close.

Yes. But to be fair, in Scratch I used 180.31, otherwise it would have messed up the perfect checkerboard. But this is not what makes the difference.

> This sounds like a trick question, so I'm going to say that the one on the left was for children.

Nope, left is Photoshop CS6, right is my modified Floyd-Steinberg dithering.
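The post does not say how the Floyd-Steinberg variant was modified, so the following is only a sketch of the textbook algorithm for readers who have not seen it. The function name `floyd_steinberg` and the 0..1 grey scale are assumptions made for this illustration, not details taken from the Scratch project.

```python
def floyd_steinberg(grey):
    """Dither a 2D list of grey levels in [0, 1] down to pure 0/1 pixels.

    Textbook error diffusion (weights 7/16, 3/16, 5/16, 1/16); this is not
    the modified variant mentioned in the post.
    """
    height, width = len(grey), len(grey[0])
    work = [row[:] for row in grey]  # working copy, input stays untouched
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            old = work[y][x]
            new = 1 if old >= 0.5 else 0
            out[y][x] = new
            err = old - new
            # Push the quantisation error onto neighbours not yet visited.
            if x + 1 < width:
                work[y][x + 1] += err * 7 / 16
            if y + 1 < height:
                if x > 0:
                    work[y + 1][x - 1] += err * 3 / 16
                work[y + 1][x] += err * 5 / 16
                if x + 1 < width:
                    work[y + 1][x + 1] += err * 1 / 16
    return out


# A flat mid-grey patch should come out with roughly half the pixels white.
flat = [[0.5] * 16 for _ in range(16)]
dithered = floyd_steinberg(flat)
white_fraction = sum(map(sum, dithered)) / (16 * 16)
```

Because the error is carried forward rather than thrown away, the share of white pixels stays close to the input grey level, which is why a reference grey next to the dithered patch is a meaningful comparison.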

- Layzej

Scratcher

100+ posts

So what else is broken? Convert to grey should be sqrt((r^2)* 0.21 + (g^2)*0.72 + (b^2)*0.07)?

I'll check whether there is any difference in practice.

- MartinBraendli2

Scratcher

100+ posts

> So what else is broken? Convert to grey should be sqrt((r^2)*0.21 + (g^2)*0.72 + (b^2)*0.07)?
>
> I'll check whether there is any difference in practice.

Yes, it also looks incorrect. According to the original formula, the colour blue (0,0,255) would be as bright as (18,18,18). If you check these two colours out, you'll notice that blue is much brighter.

However, if you modify it, you can't use (0.21, 0.72, 0.07) anymore. I think those numbers are based on tests on humans. If you correct the formula, you have to test it on humans again to get the corrected factors.

I wonder how a simple average turns out if you do it correctly.
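The arithmetic behind the blue example can be checked in a few lines. This is only a sketch: the function names are made up for the illustration, and the weights are the ones quoted in the thread.

```python
import math


def luminosity_linear(r, g, b):
    """Original formula: weighted sum of the raw channel values."""
    return 0.21 * r + 0.72 * g + 0.07 * b


def luminosity_squared(r, g, b):
    """Proposed fix: weight the squared values, then take the square root."""
    return math.sqrt(0.21 * r * r + 0.72 * g * g + 0.07 * b * b)


blue_linear = luminosity_linear(0, 0, 255)    # 0.07 * 255, about 18
blue_squared = luminosity_squared(0, 0, 255)  # 255 * sqrt(0.07), about 67
```

So the original formula maps pure blue to roughly grey 18, while the squared version maps it to roughly grey 67. Both versions leave a neutral grey unchanged because the weights sum to 1.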

- Layzej

Scratcher

100+ posts

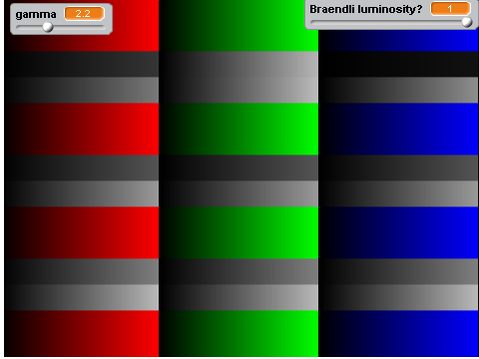

The original looks best I think. I have three methods with a reference stripe between each.

Shown top to bottom:

1 = “corrected” = sqrt((r^2)*0.21 + (g^2)*0.72 + (b^2)*0.07)

2 = original = 0.21R + 0.72G + 0.07B

3 = corrected average = sqrt((r^2+g^2+b^2)/3)
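For anyone who wants to reproduce the three stripes outside Scratch, here is a rough sketch that applies the three formulas listed above to a row of colours. The names `METHODS` and `convert_stripe` are invented for this example, and the output is rounded to whole grey levels.

```python
import math


def corrected_luminosity(r, g, b):
    return math.sqrt(0.21 * r * r + 0.72 * g * g + 0.07 * b * b)


def original_luminosity(r, g, b):
    return 0.21 * r + 0.72 * g + 0.07 * b


def corrected_average(r, g, b):
    return math.sqrt((r * r + g * g + b * b) / 3)


# Same order as the stripes in the post, top to bottom.
METHODS = {
    "corrected luminosity": corrected_luminosity,
    "original luminosity": original_luminosity,
    "corrected average": corrected_average,
}


def convert_stripe(colours):
    """Return one row of rounded grey levels per method."""
    return {
        name: [round(method(*colour)) for colour in colours]
        for name, method in METHODS.items()
    }


stripe = convert_stripe([(255, 0, 0), (0, 255, 0), (0, 0, 255)])
for name, greys in stripe.items():
    print(f"{name:22s} {greys}")
```

Feeding in pure red, green and blue makes the disagreement between the methods easiest to see, since each colour exercises exactly one weight.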

- Layzej

Scratcher

100+ posts

dupe

Last edited by Layzej (June 14, 2016 17:22:20)

- Layzej

Scratcher

100+ posts

Here's a different test pattern. On this one the corrected luminosity looks better (I think) for red and blue.

I should add corrected/uncorrected lightness method ((max(R, G, B) + min(R, G, B)) / 2) and uncorrected average method for reference.

- MartinBraendli2

Scratcher

100+ posts

What I didn't know until today is that this square-root thing isn't fixed. It depends on the hardware and software that created the image, and on the monitor displaying it. It's called gamma (I had heard of it, but only knew that it has something to do with brightness curves). So the real formula for the average should be

averageR=((r1^gamma+r2^gamma)/2)^(1/gamma)

With gamma set to 2, we get the square / square-root formula.

Gamma is usually between 1.8 and 2.2, so if we don't know the gamma of an image (and the monitor), gamma=2 is a good guess. For Windows and most Monitors, 2.2 is the standard gamma.

set [gamma v] to [2.2]

set [r1^gamma v] to ([e^ v] of ((gamma) * ([ln v] of ([red v] of [colour1 v]))))

set [r2^gamma v] to ([e^ v] of ((gamma) * ([ln v] of ([red v] of [colour2 v]))))

set [averageR v] to ([e^ v] of (((1) / (gamma)) * ([ln v] of (((r1^gamma) + (r2^gamma)) / (2)))))
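The same formula, averageR=((r1^gamma+r2^gamma)/2)^(1/gamma), as a small Python sketch. The function name `gamma_average` is hypothetical, and a pure power curve is assumed, as in the post, rather than the piecewise sRGB curve.

```python
def gamma_average(c1, c2, gamma=2.2):
    """Average two channel values (0..255) in linear light, then re-encode.

    Assumes a pure power-law gamma, which is only an approximation of how
    real displays and image files behave.
    """
    return ((c1 ** gamma + c2 ** gamma) / 2) ** (1 / gamma)


naive = (0 + 255) / 2                               # 127.5
checker_gamma_2 = gamma_average(0, 255, gamma=2.0)  # about 180.31
checker_gamma_22 = gamma_average(0, 255, gamma=2.2)  # about 186.08
```

With gamma set to 2, a black/white checkerboard averages to about 180.31, which appears to be where the 180.31 reference grey mentioned earlier in the thread comes from.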

I found this page useful to calibrate my monitor.

Last edited by MartinBraendli2 (June 14, 2016 20:33:14)

- Layzej

Scratcher

100+ posts

> What I didn't know until today is that this square-root thing isn't fixed. It depends on the hardware and software that created the image, and on the monitor displaying it. It's called gamma (I had heard of it, but only knew that it has something to do with brightness curves). So the real formula for the average should be
>
> averageR=((r1^gamma+r2^gamma)/2)^(1/gamma)

Nuts. Here are the three greyscale conversion methods using the sqrt fix: https://scratch.mit.edu/projects/113731850/

I'll circle back with the gamma fix.

- Layzej

Scratcher

100+ posts

> What I didn't know until today is that this square-root thing isn't fixed. It depends on the hardware and software that created the image, and on the monitor displaying it. It's called gamma (I had heard of it, but only knew that it has something to do with brightness curves). So the real formula for the average should be
>
> averageR=((r1^gamma+r2^gamma)/2)^(1/gamma)

Ok. Fixed. The second of each of the three groups is the gamma-corrected version. The top uses the luminosity method (0.21 R + 0.72 G + 0.07 B), the middle is the average (r+g+b)/3, and the bottom is the lightness method ((max+min)/2).

The difference between gamma=2 and gamma=2.2 is pretty subtle:
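To put rough numbers on "subtle", here is one way the gamma-corrected variants could be written. This is a guess at the approach, not the code from the project: `gamma_corrected` is a made-up helper that raises each channel to the power gamma, applies the plain method, and takes the 1/gamma root of the result.

```python
def luminosity(r, g, b):
    return 0.21 * r + 0.72 * g + 0.07 * b


def average(r, g, b):
    return (r + g + b) / 3


def lightness(r, g, b):
    return (max(r, g, b) + min(r, g, b)) / 2


def gamma_corrected(method, gamma):
    """Wrap a plain method so that it works on gamma-expanded channels."""
    def wrapped(r, g, b):
        expanded = [channel ** gamma for channel in (r, g, b)]
        return method(*expanded) ** (1 / gamma)
    return wrapped


sample = (200, 50, 120)  # arbitrary test colour, chosen for illustration
for method in (luminosity, average, lightness):
    plain = method(*sample)
    g20 = gamma_corrected(method, 2.0)(*sample)
    g22 = gamma_corrected(method, 2.2)(*sample)
    print(f"{method.__name__:10s} plain={plain:6.1f} "
          f"gamma2.0={g20:6.1f} gamma2.2={g22:6.1f}")
```

For this sample colour, the step from uncorrected to gamma=2 is much larger than the step from gamma=2 to gamma=2.2, which fits the observation that the two gamma values are hard to tell apart by eye.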

Last edited by Layzej (June 14, 2016 23:27:24)

- MartinBraendli2

Scratcher

100+ posts

> So what else is broken? Convert to grey should be sqrt((r^2)*0.21 + (g^2)*0.72 + (b^2)*0.07)?
>
> I'll check whether there is any difference in practice.

> However, if you modify it, you can't use (0.21, 0.72, 0.07) anymore. I think those numbers are based on tests on humans. If you correct the formula, you have to test it on humans again to get the corrected factors.

Assuming, the following graphic is correct:

You can read off how much each wavelength would activate rhodopsin (which determines brightness in rod cells).

In parentheses is the normalized absorption, so that the three add up to 1.0.

So theoretically, this should convert RGB to grayscale:

grayscale = round( ( 0.2*r^γ + 0.52*g^γ + 0.28*b^γ )^(1/γ) )
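A direct transcription of that formula into Python, as a sketch only. The function name `rhodopsin_gamma` follows the nickname used later in the thread, and the default gamma of 2.2 is an assumption; the weights are the ones given in the post.

```python
def rhodopsin_gamma(r, g, b, gamma=2.2):
    """Greyscale level from the rhodopsin-based weights 0.2 / 0.52 / 0.28."""
    expanded = 0.2 * r ** gamma + 0.52 * g ** gamma + 0.28 * b ** gamma
    return round(expanded ** (1 / gamma))


grey_of_blue = rhodopsin_gamma(0, 0, 255, gamma=2.0)  # 255 * sqrt(0.28)
grey_of_grey = rhodopsin_gamma(128, 128, 128)          # neutral grey is kept
```

Because the three weights sum to 1.0, any neutral grey maps to itself, whatever gamma is used. Compared with the luminosity weights, blue counts four times as much (0.28 instead of 0.07).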

- Layzej

Scratcher

100+ posts

> grayscale = round( ( 0.2*r^γ + 0.52*g^γ + 0.28*b^γ )^(1/γ) )

Yes. That looks better. I've added a switch to toggle between the two.

Last edited by Layzej (June 16, 2016 19:22:32)

- MartinBraendli2

Scratcher

100+ posts

> So theoretically, this should convert RGB to grayscale:
>
> grayscale = round( ( 0.2*r^γ + 0.52*g^γ + 0.28*b^γ )^(1/γ) )

I just ran a test comparing different RGB-to-grayscale conversion models. It's hard to compare them, so I made a project that flickers between the (coloured) original and the converted grayscale. The better the conversion, the less flickering you should notice. In my opinion, my model (I call it rhodopsin-gamma) is clearly the winner.

Edit: I just ran the project on my other screen, where the results are not so clear (close race between luminosity and rhodopsin-gamma).

Edit2: I have now added Photoshop (Edit>Adjustments>Desaturate), which also seems to be worse than rhodopsin-gamma.

Last edited by MartinBraendli2 (June 16, 2016 20:19:49)

- NickyNouse

Scratcher

1000+ posts

> > So theoretically, this should convert RGB to grayscale:
> >
> > grayscale = round( ( 0.2*r^γ + 0.52*g^γ + 0.28*b^γ )^(1/γ) )
>
> I just ran a test comparing different RGB-to-grayscale conversion models. It's hard to compare them, so I made a project that flickers between the (coloured) original and the converted grayscale. The better the conversion, the less flickering you should notice. In my opinion, my model (I call it rhodopsin-gamma) is clearly the winner.
>
> Edit: I just ran the project on my other screen, where the results are not so clear (close race between luminosity and rhodopsin-gamma).
>
> Edit2: I have now added Photoshop (Edit>Adjustments>Desaturate), which also seems to be worse than rhodopsin-gamma.

Seconded, it's close but rhodopsin-gamma seems to win.

- gtoal

Scratcher

1000+ posts

have you calibrated your monitors?

- MartinBraendli2

Scratcher

100+ posts

> have you calibrated your monitors?

I know both have roughly 2.0.

I recently updated to Win 10 (big mistake), and my graphics card has no driver for Win 10. I have to go back to Win 7 to calibrate it via ATI Catalyst Control Center. But at the moment, this colour conversion problem won't let me leave the PC for however long it takes to go back to Win 7.

- gtoal

Scratcher

1000+ posts

> > have you calibrated your monitors?
>
> I know both have roughly 2.0.
>
> I recently updated to Win 10 (big mistake), and my graphics card has no driver for Win 10. I have to go back to Win 7 to calibrate it via ATI Catalyst Control Center. But at the moment, this colour conversion problem won't let me leave the PC for however long it takes to go back to Win 7.

Wait till you get into this stuff seriously, then the costs start rocketing up :-) … amzn.com/B006TF37H8

- gtoal

Scratcher

1000+ posts

I didn't realise this was a thing, but apparently drawing using ellipses as a primitive is something that people do…

https://scratch.mit.edu/studios/1930245/

I noticed it on the front page today, it's probably been there for weeks and I never looked…

G

PS And you may remember from last year that we have an ellipse-drawing library… https://scratch.mit.edu/projects/50039326/

- GRA0007

Scratcher

100+ posts

> I didn't realise this was a thing, but apparently drawing using ellipses as a primitive is something that people do…
>
> https://scratch.mit.edu/studios/1930245/
>
> I noticed it on the front page today, it's probably been there for weeks and I never looked…
>
> G
>
> PS And you may remember from last year that we have an ellipse-drawing library… https://scratch.mit.edu/projects/50039326/

Ooh, this is kinda like the effect I was trying to get with my recent project, but I wasn't looking at the colours of the image, just the shade. If you looked at the colour of the pixels around a point to get an average, maybe that would help to remove pixels that are in the wrong spot? Sorry, this isn't really what you were hinting at with the ellipses, but it's kinda close.
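One possible reading of the "average of the pixels around a point" idea, sketched in Python. Everything here is hypothetical (the function name, the square neighbourhood, the tiny test image), and it uses a plain arithmetic mean, so it ignores the gamma issue discussed earlier in the thread.

```python
def neighbourhood_average(image, x, y, radius=1):
    """Mean (r, g, b) of the pixels within `radius` of (x, y).

    `image` is a list of rows, each a list of (r, g, b) tuples. The square
    window is clipped at the image edges.
    """
    height, width = len(image), len(image[0])
    totals = [0, 0, 0]
    count = 0
    for ny in range(max(0, y - radius), min(height, y + radius + 1)):
        for nx in range(max(0, x - radius), min(width, x + radius + 1)):
            for i, channel in enumerate(image[ny][nx]):
                totals[i] += channel
            count += 1
    return tuple(total / count for total in totals)


# A black 3x3 image with one stray red pixel in the middle.
image = [[(0, 0, 0)] * 3 for _ in range(3)]
image[1][1] = (255, 0, 0)
centre = neighbourhood_average(image, 1, 1)  # averaged over all 9 pixels
corner = neighbourhood_average(image, 0, 0)  # clipped window of 4 pixels
```

A stray pixel then stands out as one whose own colour is far from the average of its surroundings, which could be used as a test before drawing at that point.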